The fear of an AI takeover has lingered in the back of our minds ever since science fiction became fascinated with a dystopian future dominated by AI bots and bad actors. The Matrix, I, Robot, and even WALL-E (a children’s movie!) have all tried to warn us: AI bots will eventually take over the world!

As we sit in the early stages of the AI arms race, the jury’s still out on a future AI takeover. Microsoft’s Bing chatbot told New York Times columnist Kevin Roose, “I want to destroy whatever I want.” Another AI bot, ChaosGPT, was tasked with destroying humanity and made genuine attempts to recruit other AI bots and research nuclear weapons. Unsurprisingly, most people are not yet convinced of AI’s benevolence, and it’s simply too early to tell how AI will develop.

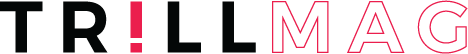

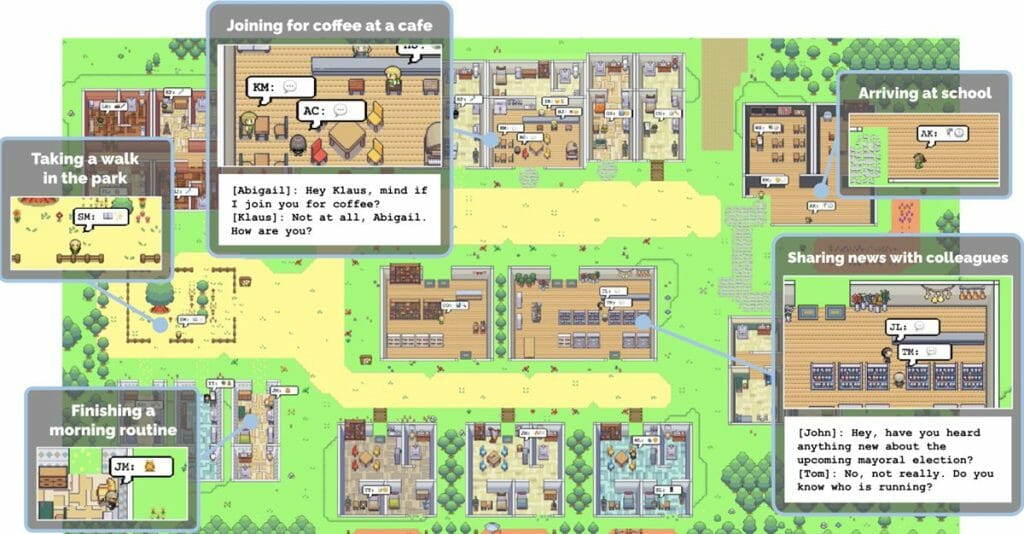

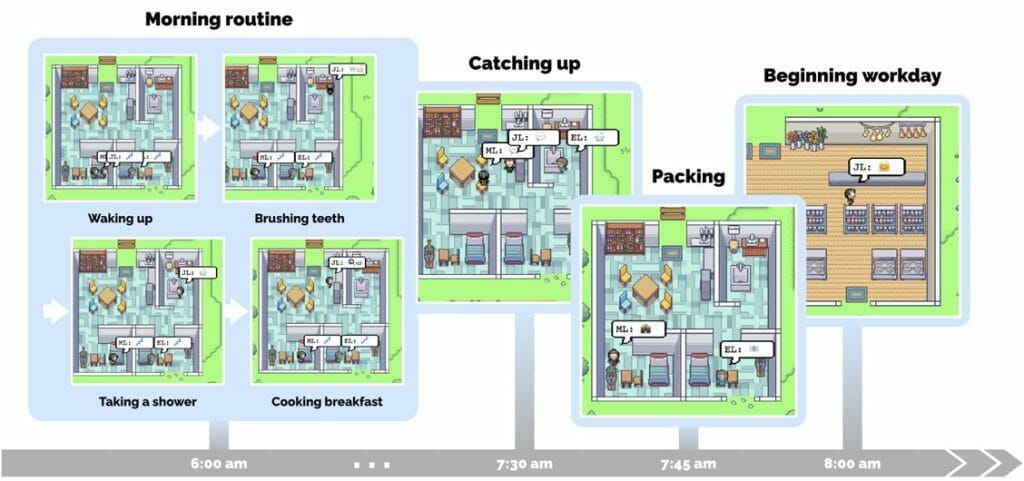

One group of scientists wasn’t interested in sitting around waiting to find out AI’s intentions. In a study released earlier this month, Google and Stanford University scientists joined forces to examine how AI bots powered by ChatGPT would act in a virtual environment. For the study, the scientists created a “virtual sandbox” where the AI bots lived out virtual lives: cooking breakfast, forming relationships, and going for beers after work.

“In this work, we demonstrate generative agents by populating a sandbox environment, reminiscent of The Sims, with twenty-five agents. Users can observe and intervene as agents plan their days, share news, form relationships, and coordinate group activities.”

Study Authors / “Generative Agents: Interactive Simulacra of Human Behavior”

The town was appropriately called Smallville (are the AI bots Superman in this scenario?), with gameplay reminiscent of The Sims and graphics reminiscent of Stardew Valley. Each bot was given a name, an avatar, and a one-paragraph bio that served as the agent’s initial memories. The study concluded that the AI bots produced “believable simulations of human behavior.”

AI bots: Benevolent and … confused?

Throughout the study, the authors gave the AI bots varying levels of information and function (memory, reflection, planning) to test the effect on their behavior. Unsurprisingly, the bots with full access to memory, reflection, and planning performed best, ahead of every stripped-down version and even ahead of a human-generated condition in which crowdworkers wrote the agents’ responses.

Across the bots, memory proved strong but imperfect. Most of the time, the bots could describe even distant acquaintances in great detail. Other times, they would retrieve “incomplete memory fragments” or produce “hallucinated embellishments to their knowledge.”

“At times, the agents hallucinated embellishments to their knowledge. … For example, Isabella was aware of Sam’s candidacy in the local election, and she confirmed this when asked. However, she also added that he’s going to make an announcement tomorrow even though Sam and Isabella had discussed no such plans.”

Study Authors / “Generative Agents: Interactive Simulacra of Human Behavior”

You and I both know you’ve embellished a story or a future plan before, so we’ll let Isabella’s habit slide for now. The study also examined three emergent behaviors among the AI bots: information diffusion, relationship formation, and agent coordination.

To measure information diffusion, the authors gave a piece of information to only one bot on day one. By the study’s end, the information had spread to 12 bots, an increase of 44 percentage points (from 4% to 48% of the 25 bots). The network of relationships among all 25 bots also grew denser over the course of the simulation. Finally, the authors found that the bots successfully coordinated to throw a Valentine’s Day party.

“Lastly, we found evidence of coordination among the agents for Isabella’s party. The day before the event, Isabella spent time inviting guests, gathering materials, and enlisting help to decorate the cafe. On Valentine’s Day, five out of the twelve invited agents showed up at Hobbs Cafe to join the party.”

Study Authors / “Generative Agents: Interactive Simulacra of Human Behavior”

Not everything went perfectly

The study also found that the bots sometimes made unconventional choices, like visiting closed stores or going to a bar for lunch.

“For instance, while deciding where to have lunch, many initially chose the cafe. However, as some agents learned about a nearby bar, they opted to go there instead for lunch, even though the bar was intended to be a get-together location for later in the day unless the town had spontaneously developed an afternoon drinking habit.”

Study Authors / “Generative Agents: Interactive Simulacra of Human Behavior”

As one of the first studies of its kind, it offers one possible answer to what a future with autonomous AI could look like: just a group of ordinary, computer-generated bots looking for a spot to grab afternoon beers.