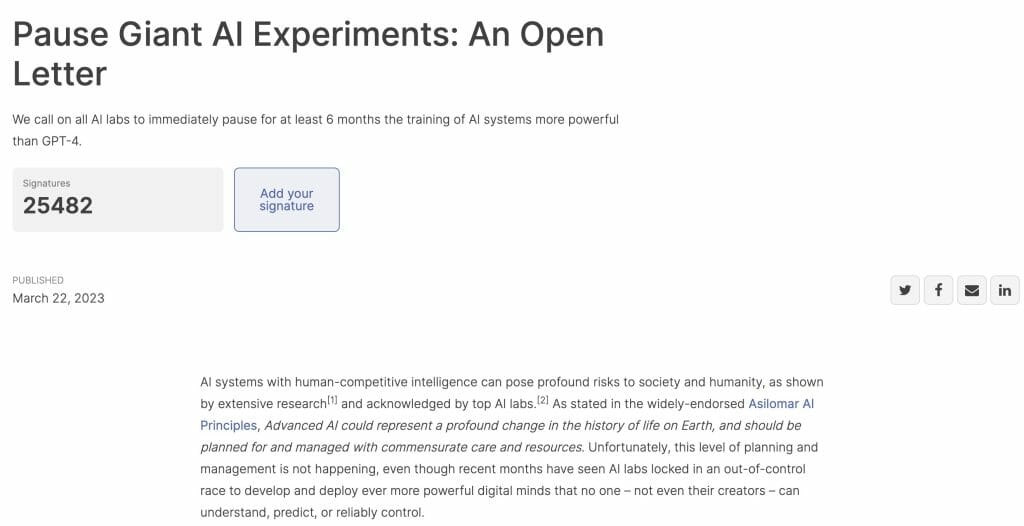

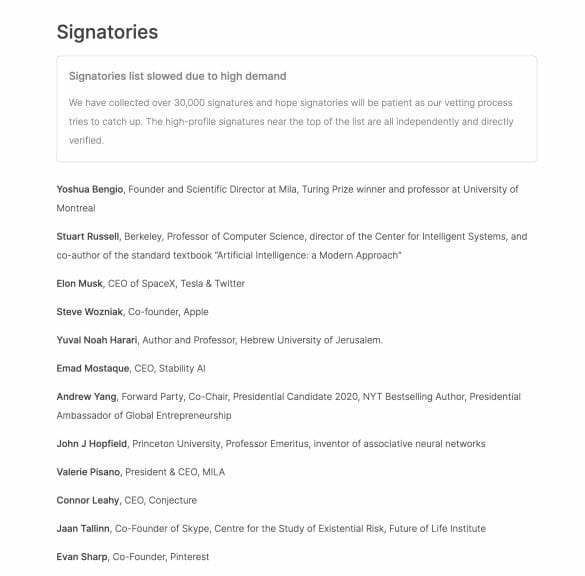

Elon Musk and Apple co-founder Steve Wozniak have signed an open letter calling for a pause on giant AI experiments in the wake of the GPT-4 frenzy. More than 30,000 people have signed the letter, which the Future of Life Institute published on March 22, 2023.

The open letter calling for an AI pause

According to the letter, “recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.” The letter also argues that this race runs counter to the widely endorsed Asilomar AI Principles.

Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources.

Future of Life Institute

The letter points out that “contemporary AI systems are now becoming human-competitive at general tasks,” which raises several serious questions we need to ask ourselves. It concludes that “powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable.”

The signatories call on “all AI labs to immediately pause for at least six months the training of AI systems more powerful than GPT-4,” and say that “AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development…”

GPT-4 takes over the world

The letter follows a series of explosive breakthroughs in artificial intelligence over the past few months. The American AI company OpenAI, its groundbreaking chatbot ChatGPT, and its latest language model, GPT-4, have been at the center of the storm.

ChatGPT is an AI chatbot application that people can interact with, powered by OpenAI’s GPT-3.5 and GPT-4 language models. The model names are easy to confuse, but if you compare ChatGPT to a car, GPT-3.5 or GPT-4 would be the engine that powers it.

Despite the controversy, businesses ranging from Microsoft to Morgan Stanley have rushed to integrate GPT-4 into their services, and new ways to use AI seem to appear on Twitter every other minute.

But are you aware of the risks we may be exposing ourselves to as we enjoy the convenience and benefits of the latest AI developments?

The AI risks we are facing

Known weaknesses of GPT-4 include hallucination, automation bias (overreliance), susceptibility to jailbreaks, bias reinforcement (sycophancy), and scalability.

According to Betanews, hallucination refers to GPT-4’s “tendency to make up facts, to double-down on incorrect information, and to perform tasks incorrectly.” The model reportedly hallucinates in more convincing and believable ways than earlier models: its fabrications often come in an authoritative tone or sit alongside accurate, detailed information, “increasing the risk of overreliance.”

GPT-4 is also “extremely susceptible to users tricking the model into circumventing the safeguards OpenAI has built for it,” and posts documenting jailbreak attempts have flooded social media. For a related example, see Prof. Michal Kosinski’s “AI escape” experiment with GPT-4.

Users have also found that GPT-4 exhibits certain societal biases and worldviews, as well as “tendencies to do things like repeat back a dialog user’s preferred answer,” a behavior known as “sycophancy.”

As users come to rely more heavily on the model, its mistakes will become increasingly difficult for the average person to spot, and people will become “less likely to challenge or verify the model’s responses.”

Meanwhile, Italy has become the first Western country to announce a ban on ChatGPT, citing breaches of data privacy rules, and the European Union is reportedly working on AI legislation. Not everyone supports halting AI development, however: Bill Gates has argued that an AI ban “won’t solve the challenges.”

The technologist-turned-philanthropist reportedly said it would be better to focus on how best to use the developments in AI, adding that it was hard to see how a pause could work globally.